In an innovation management ecosystem like Wazoku, which is inundated with data and complexity, using data effectively offers a significant opportunity to change how challenges are addressed, ideas are fostered, and solutions are discovered. In this context, there is potential to streamline procurement processes, find the right partners or collaborators, leverage existing solutions, and gain insights. Wazoku is a highly configurable innovation engine offering a suite of functions designed to meet diverse innovation management needs.

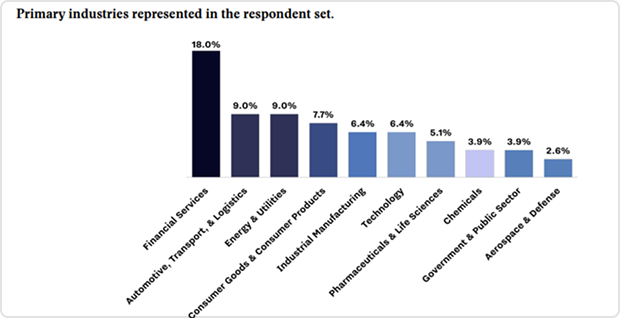

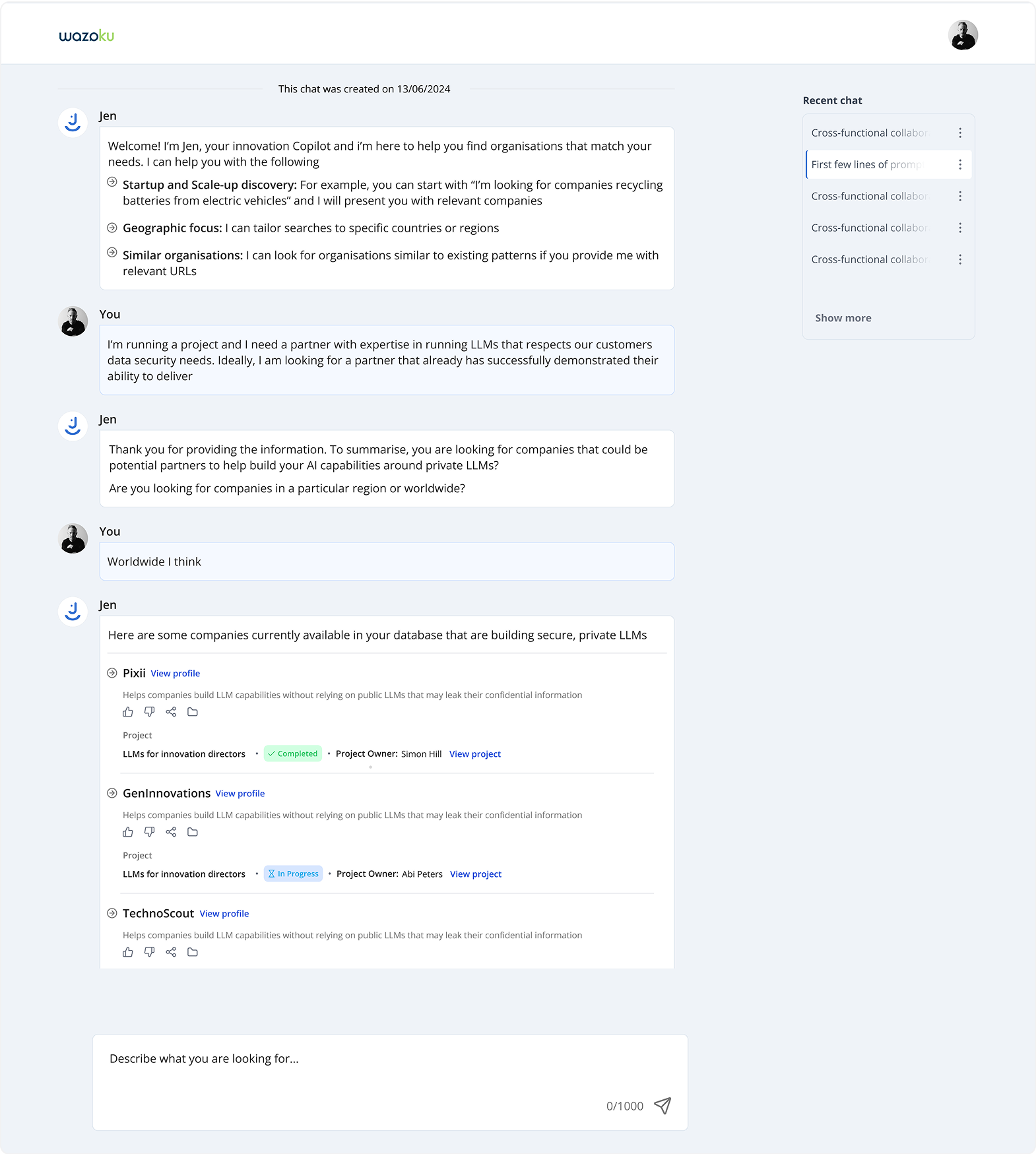

Companies often face challenges in finding suitable partners or technologies to implement evaluated ideas efficiently. To expedite innovation, they seek to connect with the right startups or technology providers that can support clusters of gathered ideas.

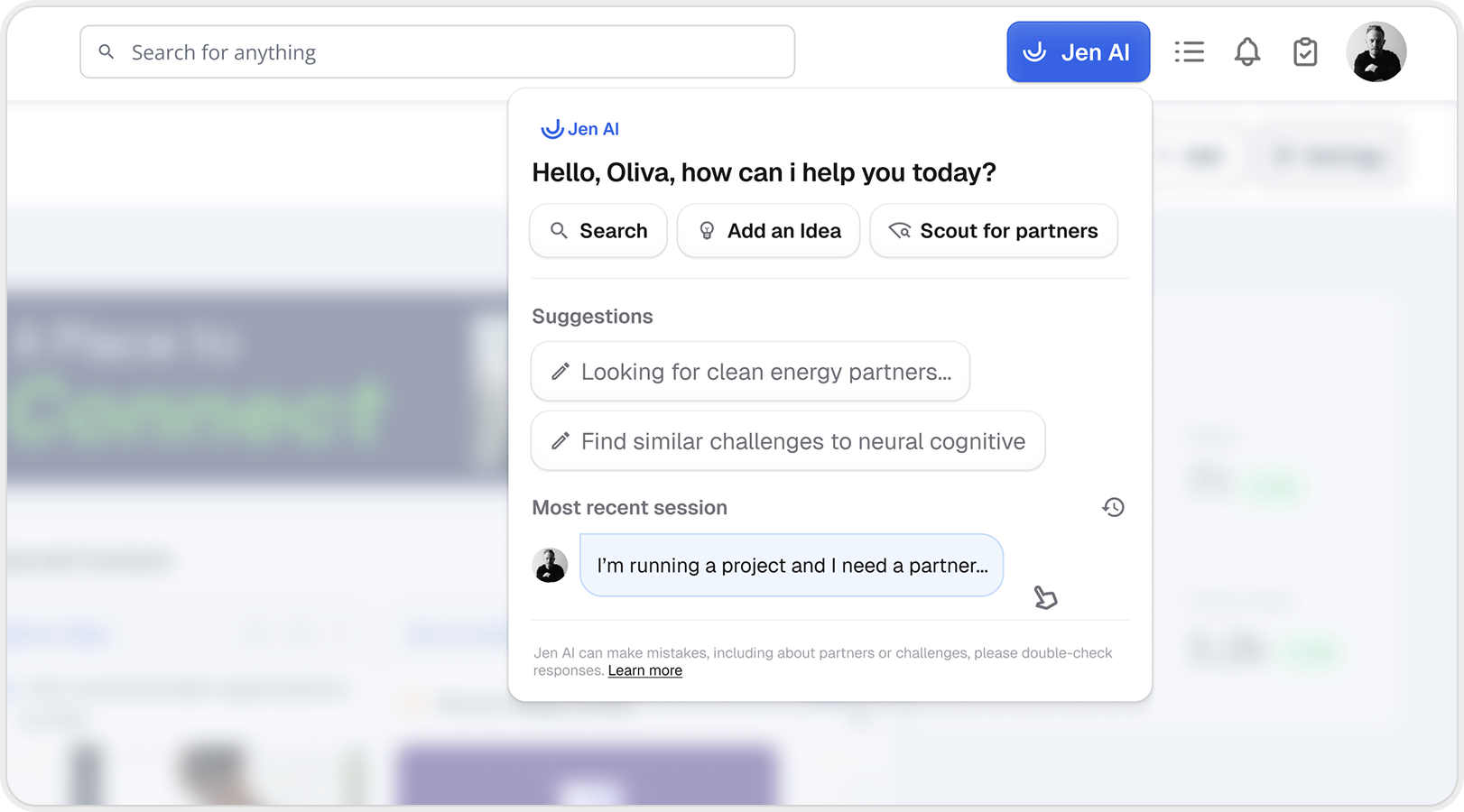

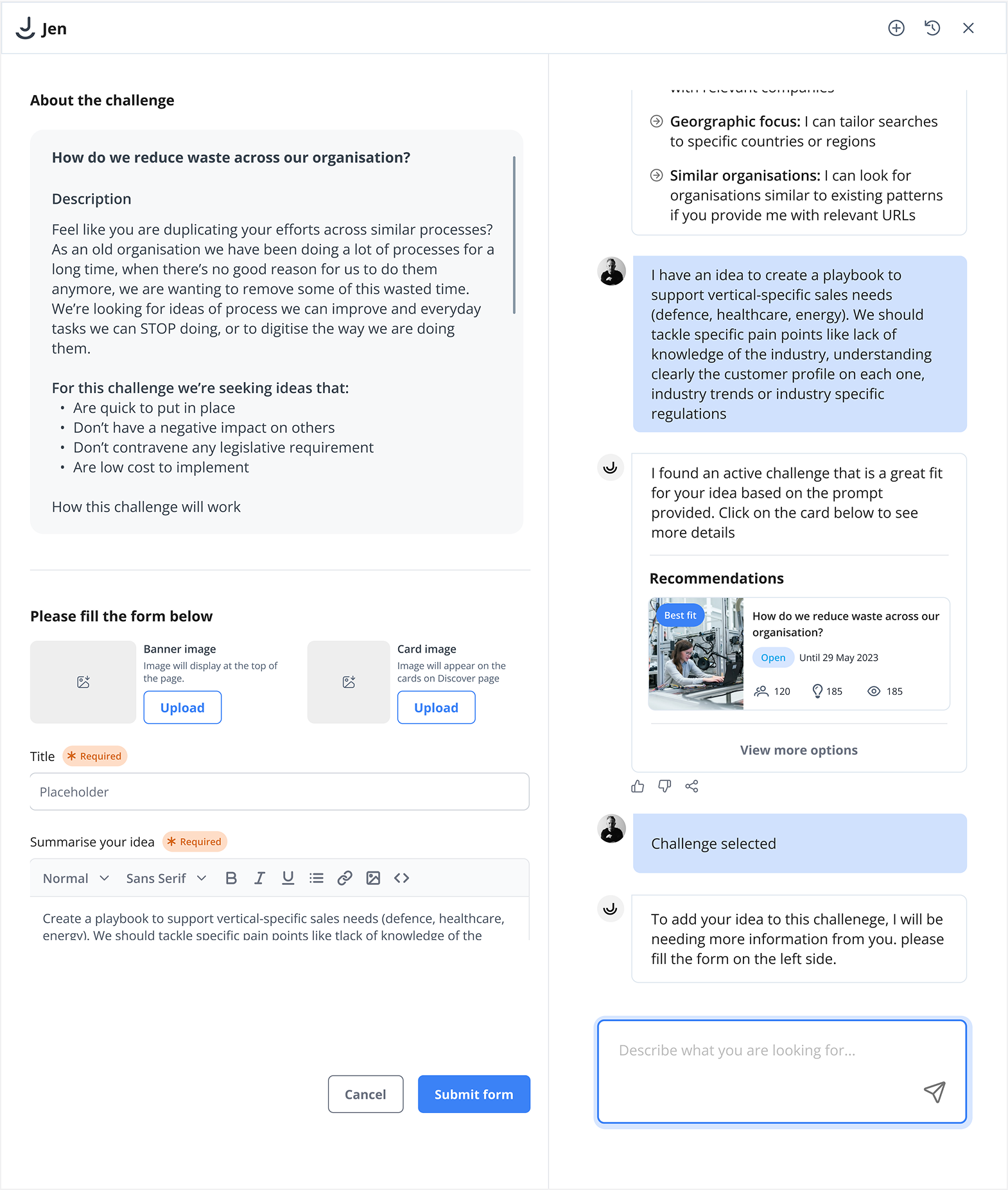

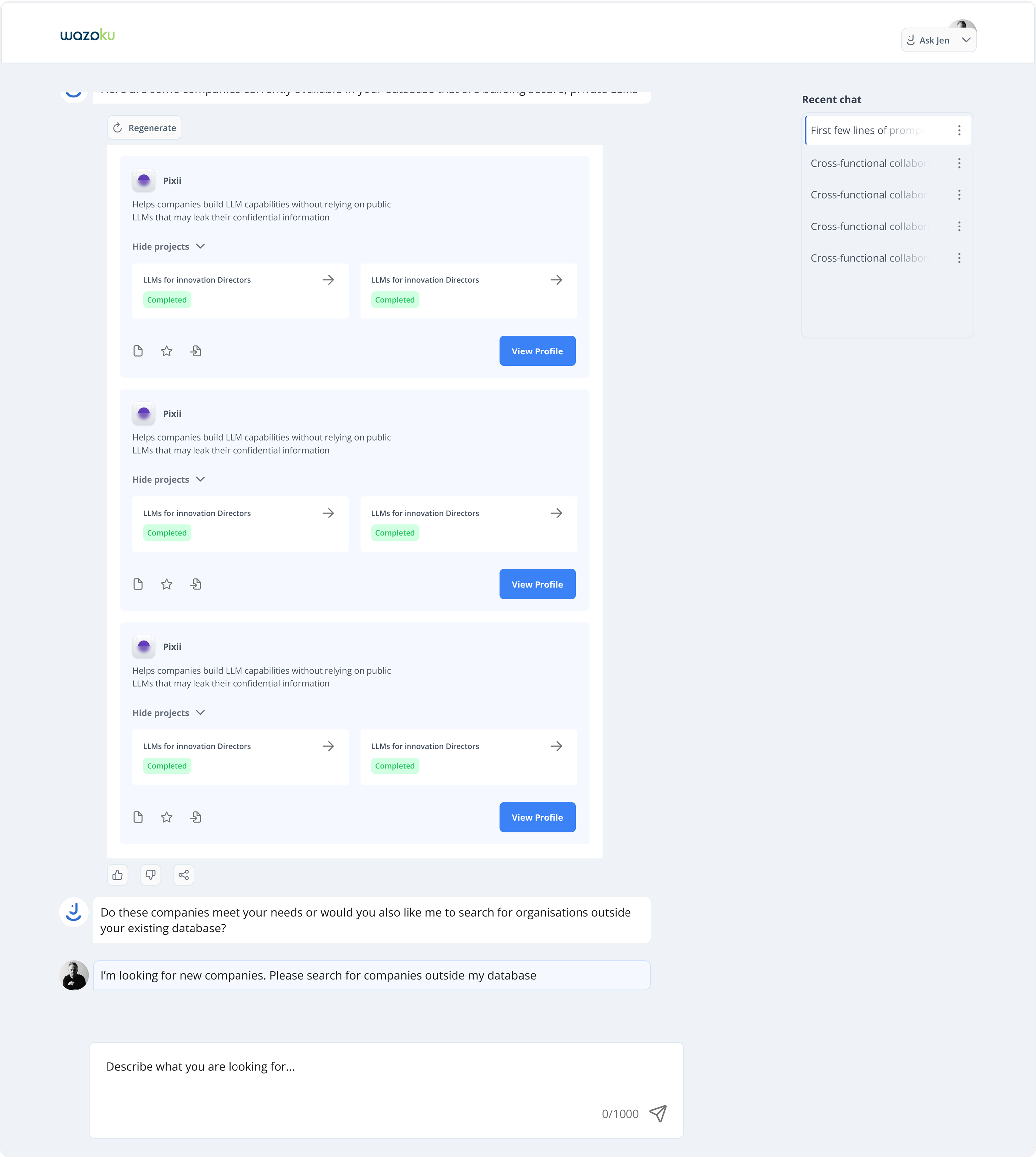

Adopting an AI-enabled approach to scouting: Building on the success of the Copilot assistant, the goal was to help companies easily identify suitable partners for diverse campaigns, clusters of ideas, and requirements, ultimately saving time and resources. The outcome was a reduction in traditional forms of scouting and a world of new possibilities for human + machine intelligence, channeling their combined productivity to achieve faster results.

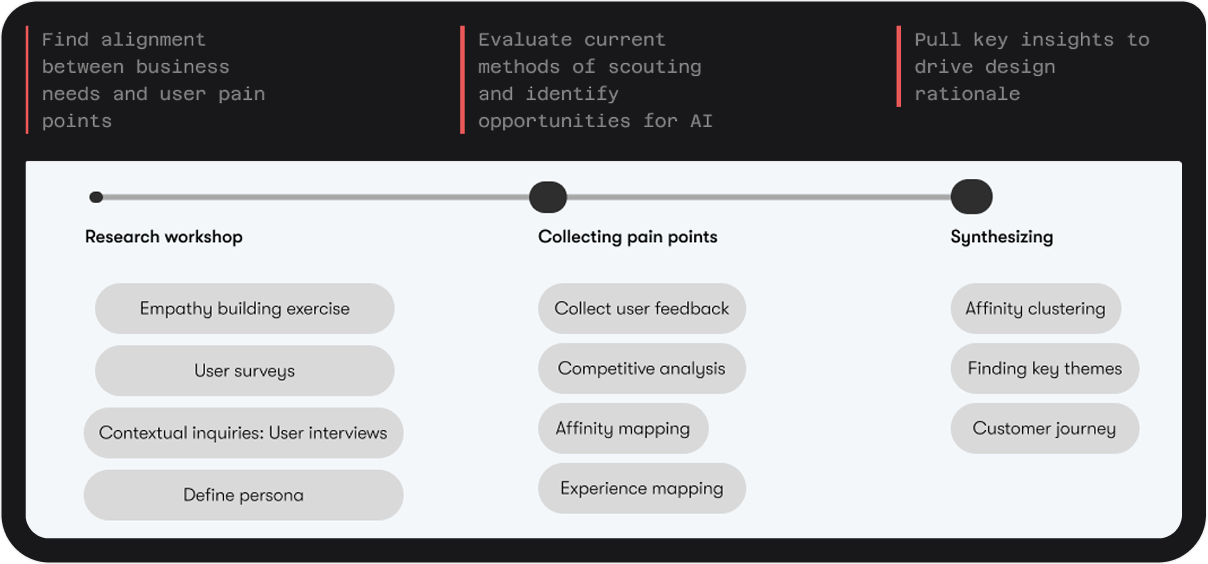

I began by building a thorough understanding of our users' needs, organising working groups of internal stakeholders and external partners seeking collaboration opportunities. Through these working groups and feedback sessions, I gained insights into their expectations and the challenges they faced in finding partners to implement ideas.

I also leveraged insights from the MVP (Copilot) to refine our understanding of user behaviour and preferences. This informed my approach to designing Jen.

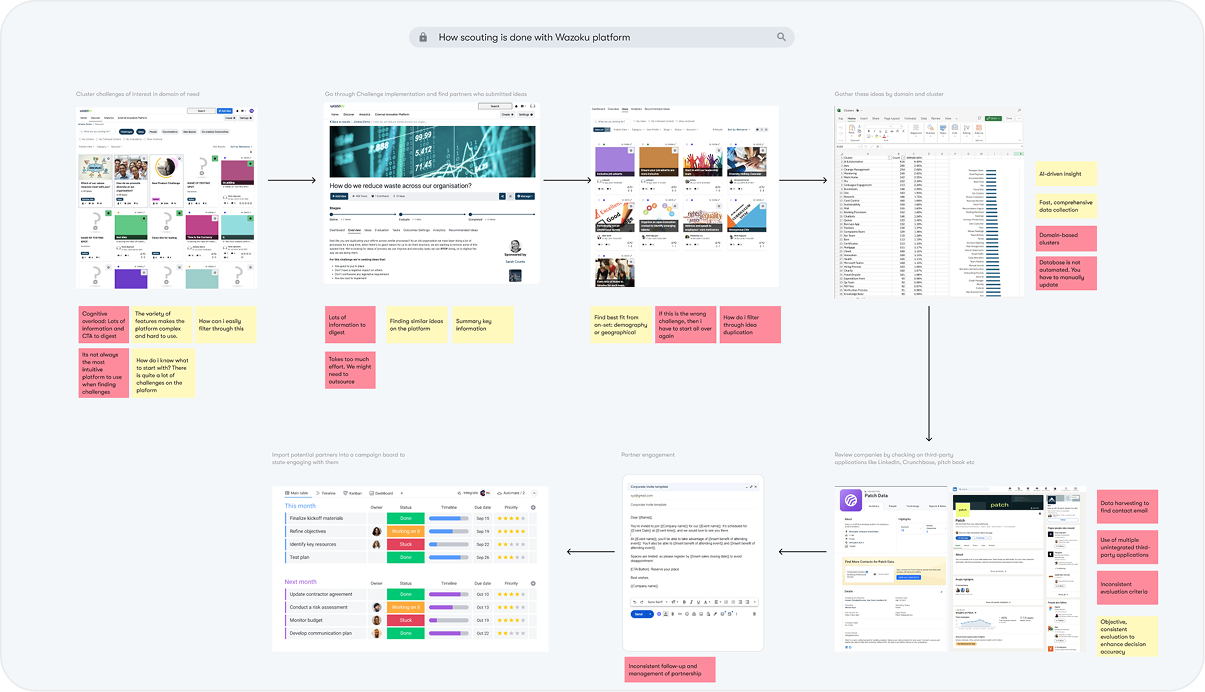

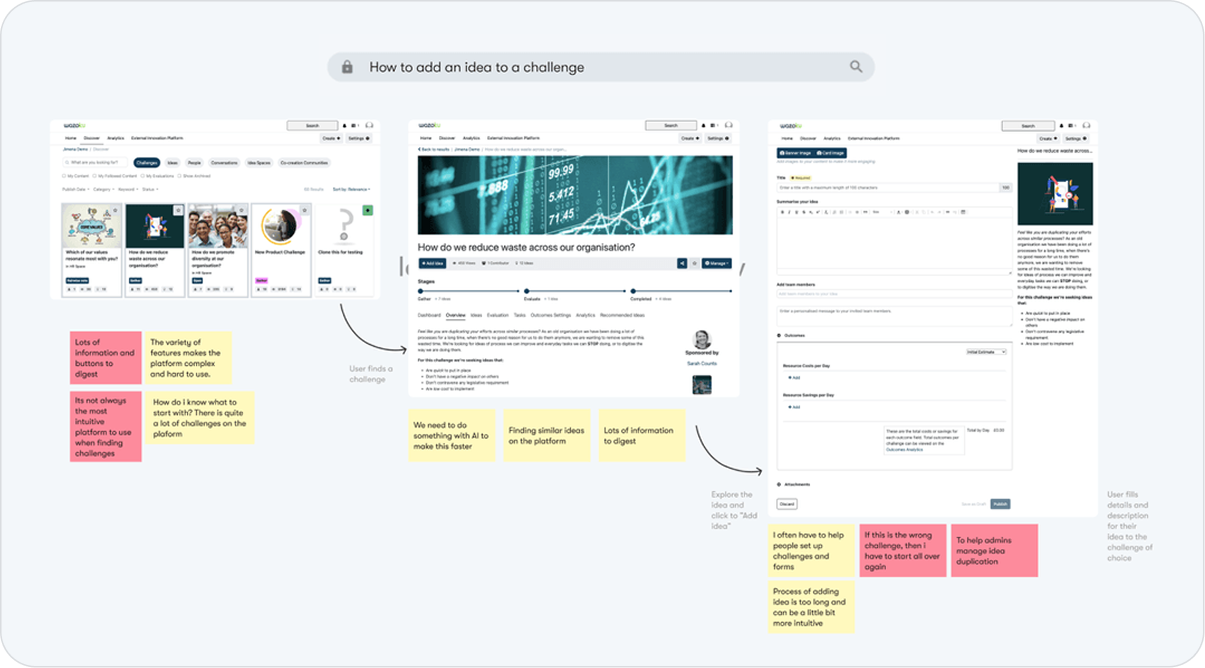

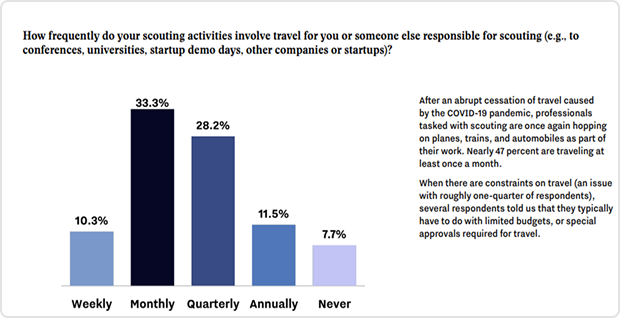

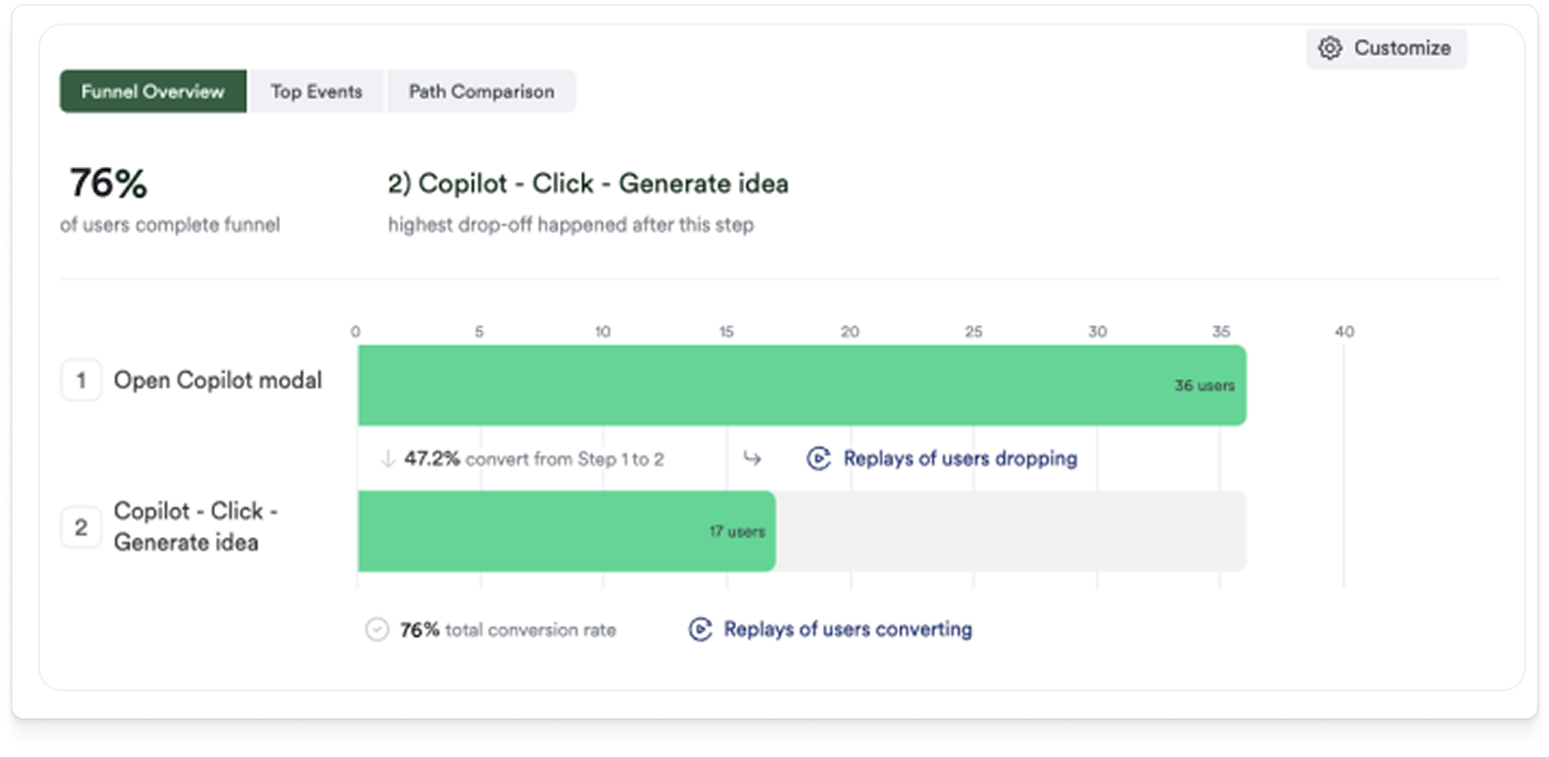

Many users drop off and decide to outsource the process

Companies struggle to cluster very similar ideas submitted by innovators or partners within the same challenge

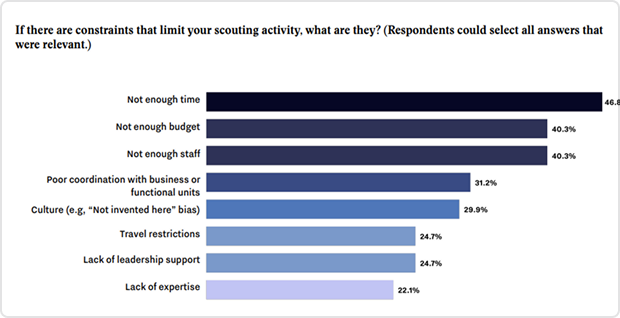

No defined timeline for the scouting process. Traditional scouting can take between 90 and 360 days, which is not cost-effective for any company

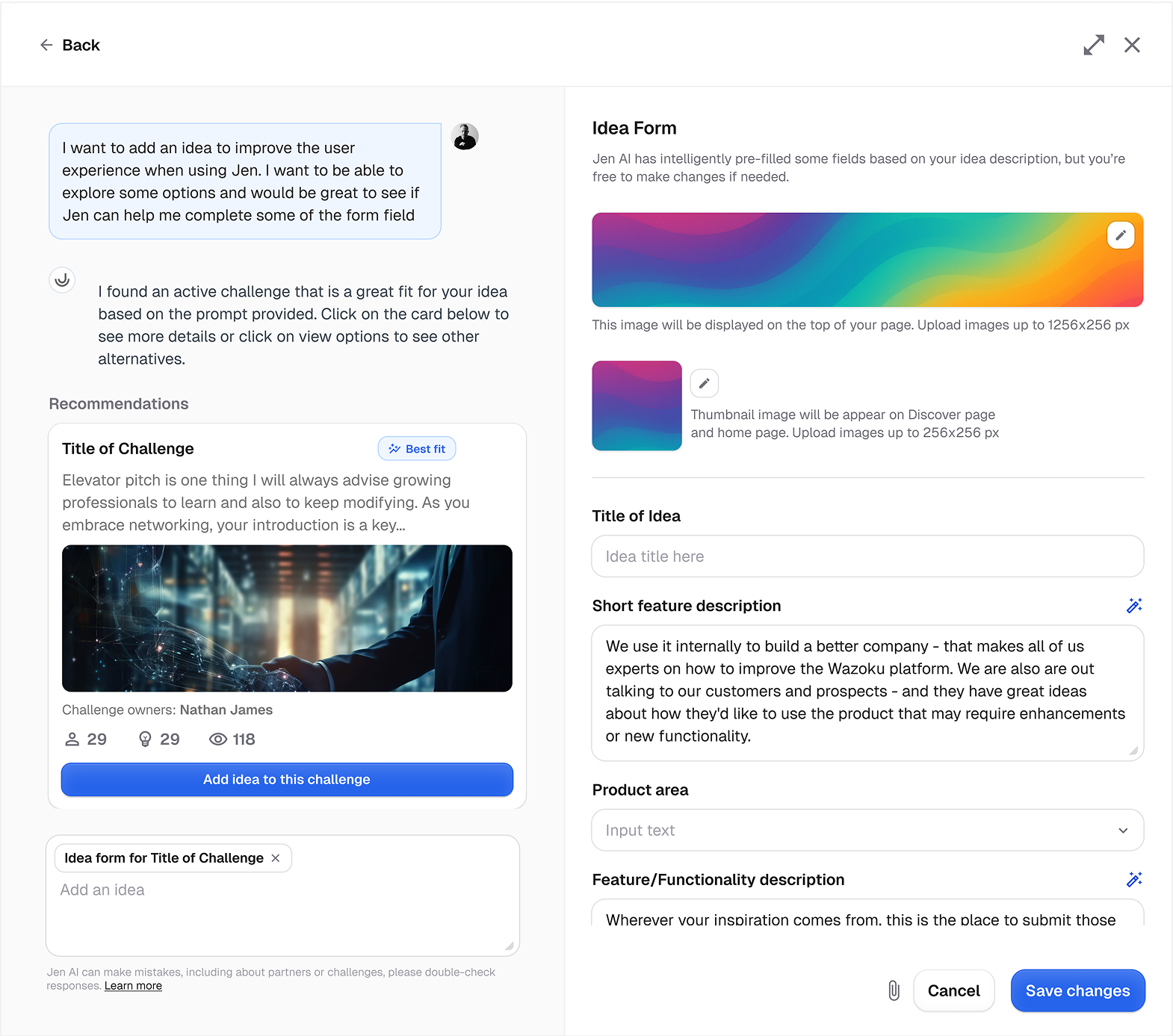

To align the pain points with business objectives, I guided stakeholders toward a deeper understanding of user needs. Together, we identified five key pillars essential for driving AI adoption, validating it as the right path for enabling scouting-on-demand.

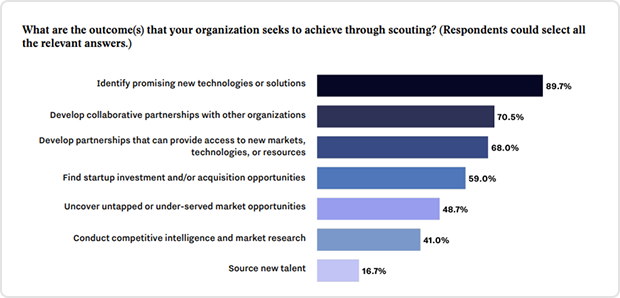

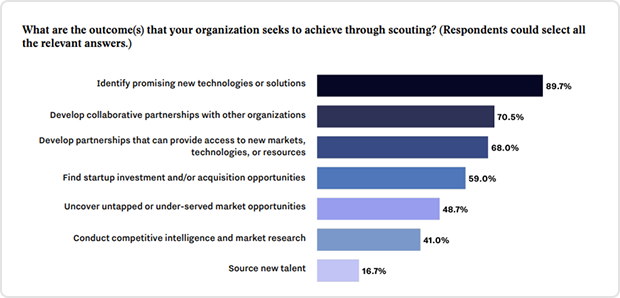

The scouting experience should require little investment (time, budget and human resources)

Designing an effective workflow, moving between several products and integrating with third-party apps can be frustrating

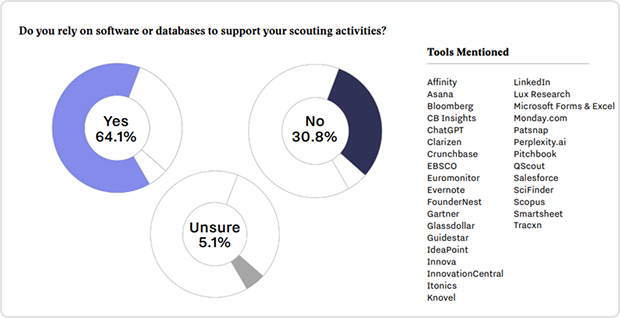

Relying on extra scouting tools that are not integrated with Wazoku is quite frustrating for them, with a lot of information shared across third-party sources

Scouting that allows coordination and sharing

Companies want to be able to find partners and provide real-time context to enhance their search experience

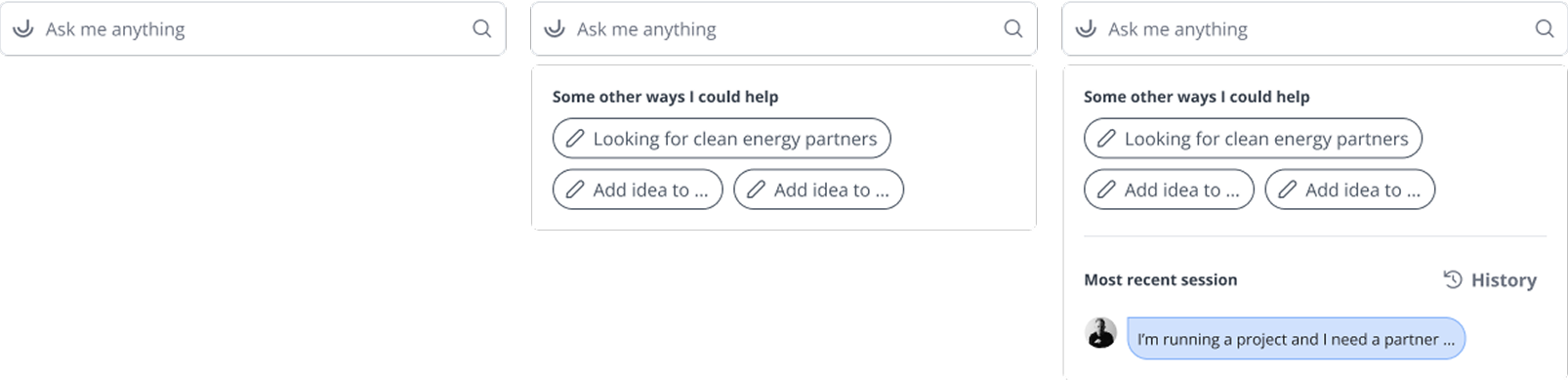

To leverage familiarity bias, I spent time studying the design of competing products. My goal was to create a product with no learning curve, thus promoting better adoption.

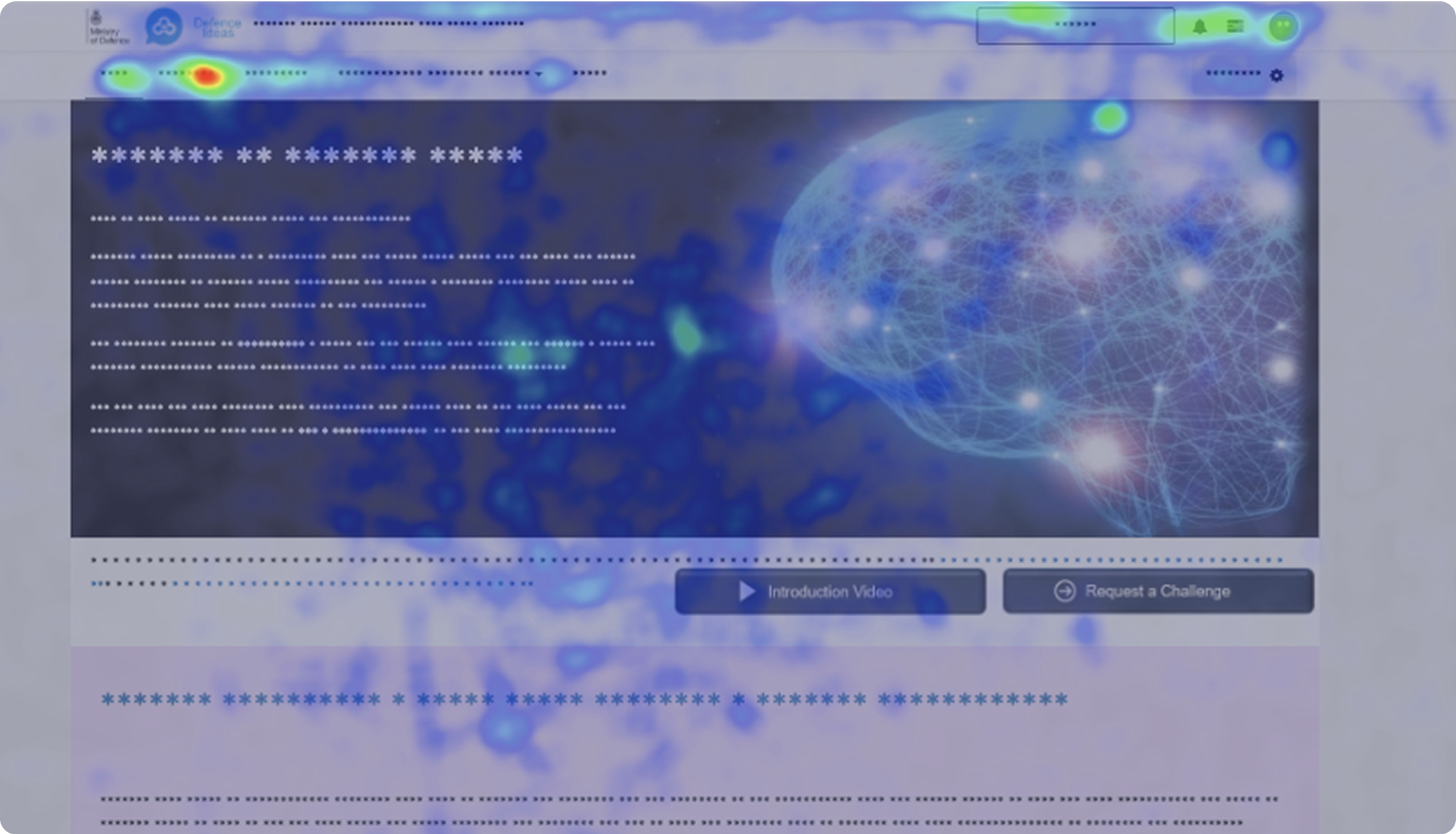

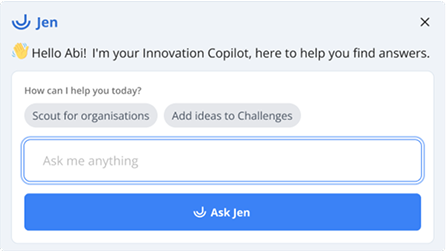

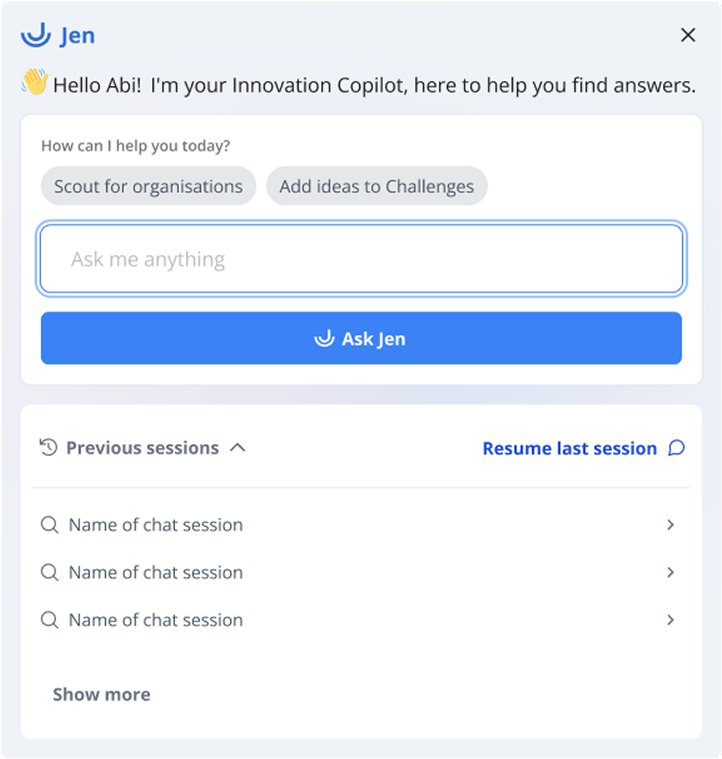

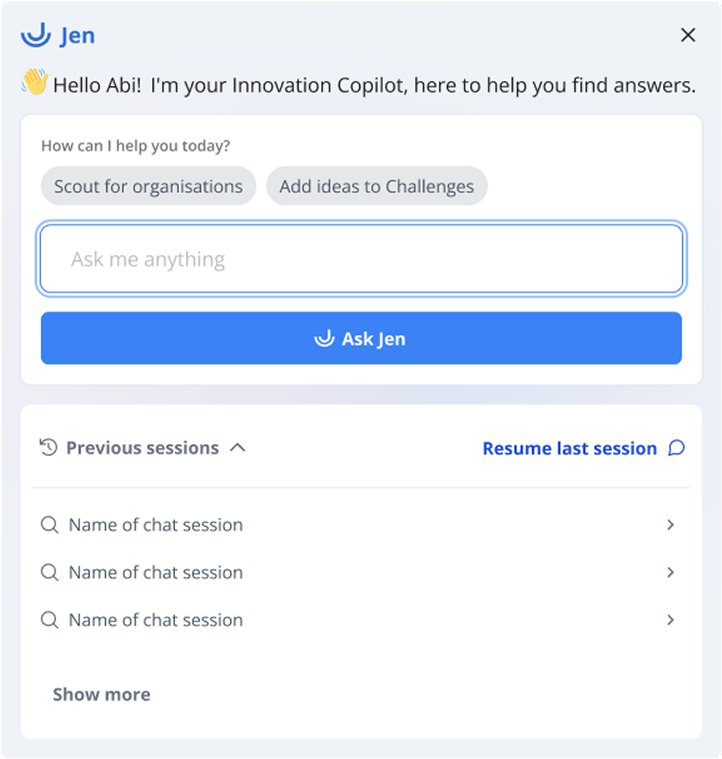

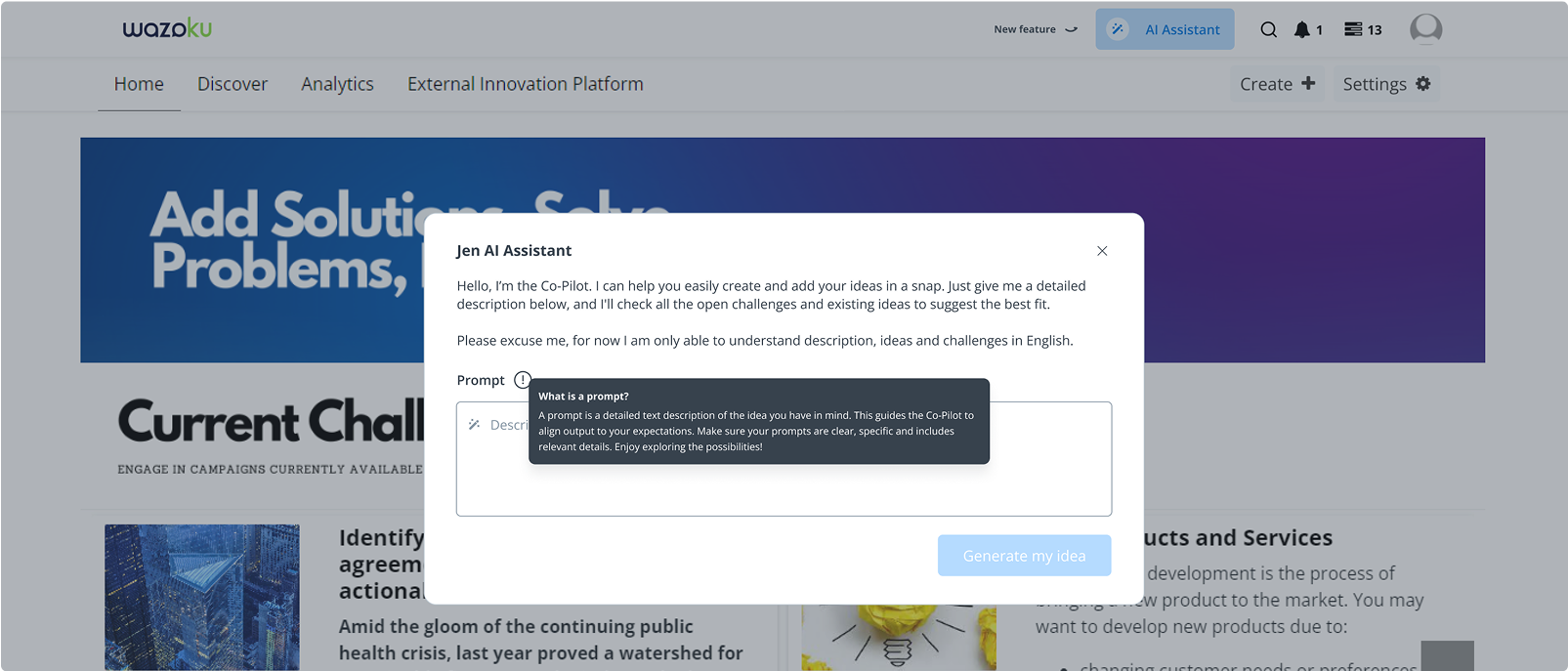

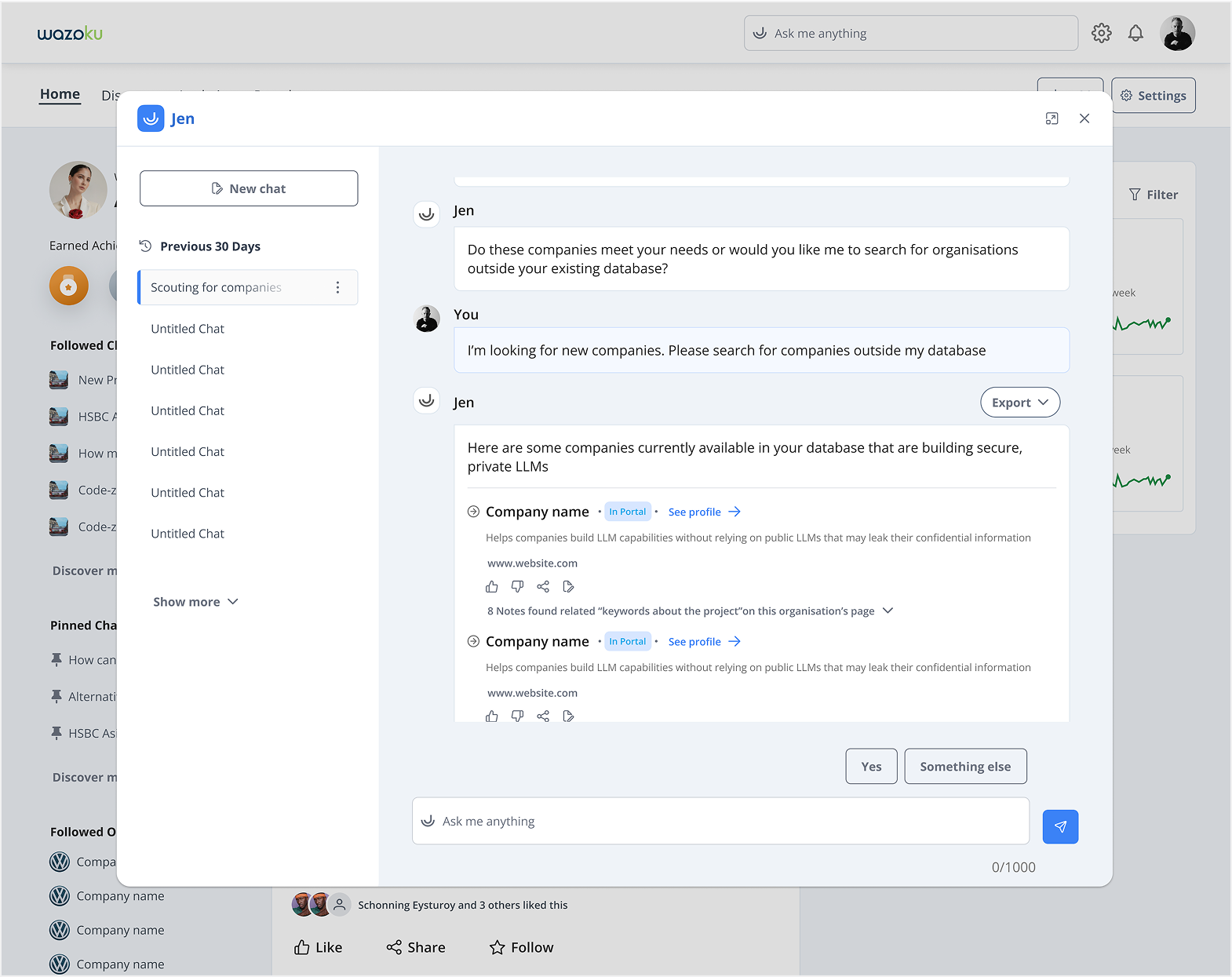

With a well-mapped mental model, I defined the entry point of Jen through heatmap analysis

Heatmap data revealed high user activity around the navigation bar and search area, which informed my decision to position Jen on the homepage for greater visibility and easier discoverability.

I tested different concepts and gathered feedback from users based on clarity of task, usability of solutions and friction points

User testing revealed that the modal design flow strongly resonated with users, improving discoverability with an average time of just 3.5 seconds from landing on the homepage to engaging with key features.

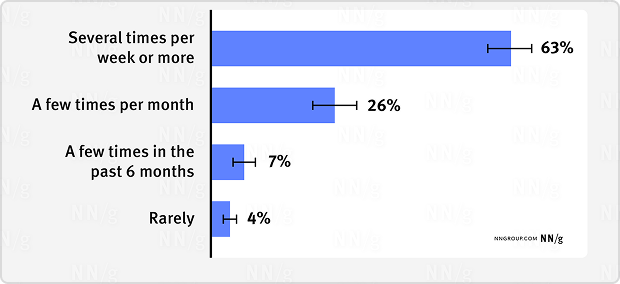

During beta testing, we collaborated closely with early adopters of Copilot to gather feedback and iterate quickly. This led to a noticeable increase in engagement per page view: 85% of users spent more time interacting with Jen, scouting partners, and contributing ideas than they did with the traditional approach.

Adopting the new scouting process significantly increased user engagement compared to the traditional approach. Enterprise users, in particular, spent an average of 3.8 minutes assigning ideas to challenges and 9 minutes scouting, indicating a higher level of interaction. This shift led to a decline in traditional idea creation and scouting, as users showed a clear preference for using the AI assistant to generate and assign ideas.